State of IP Spoofing

These charts show spoofing results under different kinds of aggregation.
They use only the most recent test from each client IP address, and
only tests run within the last year. Because the large majority of
tests occur from behind a NAT, the results are separated into tests
with no NAT involved and all tests (with and without NAT). Tests that
couldn't evaluate whether spoofing or blocking occurs are excluded.
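The selection rules above can be sketched as follows. This is only an illustration, not the code behind this report: the record fields (`ip`, `time`, `status`), the use of `None` for a test that couldn't be evaluated, and the choice to drop such tests after picking the most recent one are all assumptions.

```python
from datetime import datetime, timedelta

def latest_tests(tests, now, window=timedelta(days=365)):
    """Keep the most recent test per client IP within `window` of `now`.

    `tests` is an iterable of dicts with hypothetical keys
    "ip", "time" (datetime) and "status" (None if undetermined).
    """
    latest = {}
    for test in tests:
        if now - test["time"] > window:
            continue  # older than the one-year window
        previous = latest.get(test["ip"])
        if previous is None or test["time"] > previous["time"]:
            latest[test["ip"]] = test
    # Assumption: undetermined tests are dropped after selecting the latest.
    return {ip: t for ip, t in latest.items() if t["status"] is not None}
```

Splitting the result into the NAT and no-NAT views would be one further filter on a per-test NAT flag, which is omitted here.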

The remaining tests are first aggregated into IP blocks (/24 for IPv4 and /40
for IPv6). Blocks in which all tested client addresses yield the
same status are labeled "spoofable" or "unspoofable", and blocks
with conflicting results from different IP addresses are labeled
"inconsistent".
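As a minimal sketch of this block-level labeling, assuming each test has already been reduced to a client address and a boolean outcome (the function names and input shape are hypothetical, not taken from the Spoofer code base):

```python
import ipaddress
from collections import defaultdict

def block_of(address):
    """Map an address to its enclosing /24 (IPv4) or /40 (IPv6) block."""
    ip = ipaddress.ip_address(address)
    prefix = 24 if ip.version == 4 else 40
    return ipaddress.ip_network(f"{address}/{prefix}", strict=False)

def label_blocks(results):
    """results: iterable of (client_address, spoofable) pairs, spoofable a bool."""
    outcomes_by_block = defaultdict(set)
    for address, spoofable in results:
        outcomes_by_block[block_of(address)].add(spoofable)
    labels = {}
    for block, outcomes in outcomes_by_block.items():
        if len(outcomes) > 1:
            labels[block] = "inconsistent"  # conflicting results in one block
        else:
            labels[block] = "spoofable" if True in outcomes else "unspoofable"
    return labels
```

Passing `strict=False` lets `ip_network` accept a host address and mask it down to the block boundary.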

A similar analysis is done at the AS level, but the "inconsistent"
ASes are further subdivided into those with fewer than half of their IP
blocks considered spoofable (labeled "partly spoofable") and
those with at least half spoofable (labeled "mostly spoofable").
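One way to express the AS-level rule is sketched below. The text does not say how an "inconsistent" block counts toward the half threshold, so this sketch assumes that only blocks labeled exactly "spoofable" count; the function name and input shape are also hypothetical.

```python
def label_as(block_labels):
    """Classify one AS from the labels of its tested IP blocks."""
    if not block_labels:
        raise ValueError("an AS needs at least one tested block")
    distinct = set(block_labels)
    if distinct == {"spoofable"}:
        return "spoofable"
    if distinct == {"unspoofable"}:
        return "unspoofable"
    # Assumption: "inconsistent" blocks do not count as spoofable here.
    spoofable = sum(1 for label in block_labels if label == "spoofable")
    # Integer comparison avoids floating-point issues at exactly one half.
    if 2 * spoofable >= len(block_labels):
        return "mostly spoofable"
    return "partly spoofable"
```

For example, an AS with one spoofable and one unspoofable block sits exactly at one half and is therefore "mostly spoofable".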

| Status | Count |
|---|---|
| Spoofable | 215 |
| Inconsistent | 1 |
| Blocked | 1160 |

| Status | Count |
|---|---|
| Spoofable | 126 |
| Mostly spoofable | 16 |
| Partly spoofable | 31 |
| Blocked | 504 |

| Status | Count |
|---|---|
| Spoofable | 1110 |
| Inconsistent | 2 |
| NAT Blocked | 10604 |
| Blocked | 1156 |

| Status | Count |
|---|---|
| Spoofable | 506 |
| Mostly spoofable | 20 |
| Partly spoofable | 65 |
| NAT Blocked | 1462 |
| Blocked | 468 |

| Status | Count |
|---|---|
| Spoofable | 284 |
| Inconsistent | 37 |
| Blocked | 1506 |

| Status | Count |
|---|---|
| Spoofable | 178 |
| Mostly spoofable | 34 |
| Partly spoofable | 33 |
| Blocked | 556 |

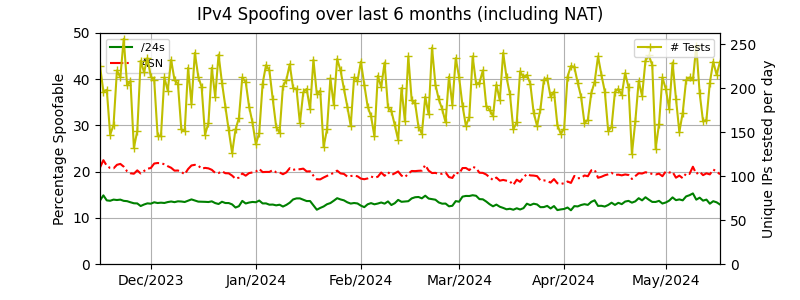

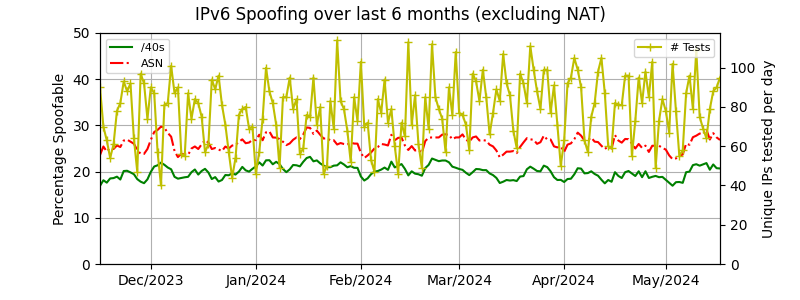

Summary of observed spoofing over the last 6 months

These graphs plot the spoofability of the IP blocks and ASes
that we have observed over the last 6 months, at a granularity of 1 day.
To prevent visual clutter, the spoofability calculation for each date
includes all tests from the preceding week, and all "inconsistent"
prefixes or ASes are considered "spoofable". Tests that
couldn't evaluate whether spoofing or blocking occurs are excluded.
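The one-week window can be sketched as below. The tuple layout, the inclusive window bounds, and returning a pair of counts are assumptions made for illustration; the exact quantity plotted in the graphs is not specified here.

```python
from datetime import date, timedelta

def daily_counts(tests, day, window=timedelta(days=7)):
    """Count spoofable and unspoofable prefixes for one plotted day.

    `tests` is an iterable of (test_date, prefix, spoofable) tuples;
    tests from `day - window` through `day` (inclusive) are used.
    """
    outcomes = {}
    for test_date, prefix, spoofable in tests:
        if day - window <= test_date <= day:
            outcomes.setdefault(prefix, set()).add(spoofable)
    # A prefix with any spoofable result counts as spoofable, which also
    # folds the "inconsistent" prefixes into the spoofable group.
    spoofable = sum(1 for seen in outcomes.values() if True in seen)
    return spoofable, len(outcomes) - spoofable
```

Calling this once per day over the 6-month range would give one data point per day.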

See the graph for the lifetime of spoofer

Top Ten Spoofer Test Results (for the last year)

We assess the geographic distribution of clients seen in the last year
both to measure the extent of our testing coverage and to determine
whether any region of the world is more susceptible to
spoofing. The value shown is the percentage of tested IP blocks (including
those behind a NAT) that show any evidence of spoofing.
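A minimal sketch of the per-region percentage, assuming each tested block carries a region code and one of the block labels used earlier. Treating both "spoofable" and "inconsistent" as "any evidence of spoofing" is an interpretation of the sentence above, and the function name is hypothetical.

```python
from collections import defaultdict

def spoofing_percentage_by_region(blocks):
    """blocks: iterable of (region, block_label) pairs."""
    totals = defaultdict(int)
    with_spoofing = defaultdict(int)
    for region, label in blocks:
        totals[region] += 1
        # Assumption: an inconsistent block shows some evidence of spoofing.
        if label in ("spoofable", "inconsistent"):
            with_spoofing[region] += 1
    return {r: 100.0 * with_spoofing[r] / totals[r] for r in totals}
```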

Source address filtering:

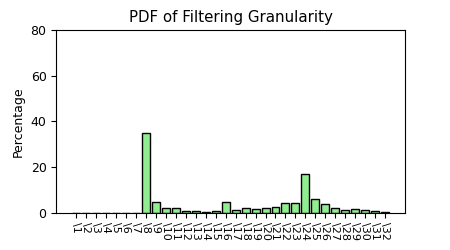

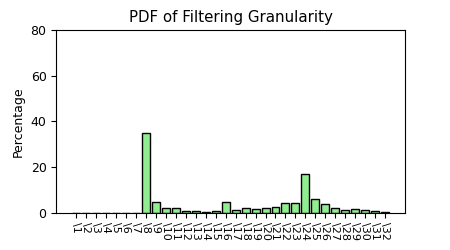

Each test run spoofs addresses from adjacent netblocks, beginning with
a direct neighbor (IP address + 1) and extending all the way to an adjacent /8.
The following figure displays the granularity of source address filtering
(typically employed by service providers) along paths tested in our study. If
the filtering occurs on a /8 boundary, for instance, a client within that
network is able to spoof 16,777,215 other addresses.
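The /8 figure follows from the size of the prefix: a /8 holds 2^24 = 16,777,216 IPv4 addresses, and the client's own address is excluded. A small sketch of that arithmetic for any filtering boundary (the function name is illustrative only):

```python
def spoofable_other_addresses(prefix_length, address_bits=32):
    """Addresses other than its own that a client can spoof when
    filtering happens at the given prefix boundary."""
    if not 0 <= prefix_length <= address_bits:
        raise ValueError("prefix length out of range")
    return 2 ** (address_bits - prefix_length) - 1

print(spoofable_other_addresses(8))   # 16777215
print(spoofable_other_addresses(24))  # 255
```

Filtering at a /32 boundary leaves no other address to spoof, which is the strictest case.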


Using the tracefilter mechanism, we measure
filtering depth: where along the tested path (from each client to the server)
filtering is employed. Depth represents the number of IP routers through
which the client can spoof before being filtered.


This report, provided by CAIDA,
aims to provide a current aggregate view of ingress and egress
filtering and IP spoofing on the Internet. While the data in this report
is the most comprehensive of its type that we are aware of, it is still an
ongoing, incomplete project. The data here is representative
only of the netblocks, addresses, and autonomous systems (ASes) of clients
from which we have received reports. The more client reports we receive
the better: they increase our accuracy and coverage.

Download and run our testing software
to automatically contribute a report to our database.

Feedback, comments and bug fixes welcome; contact spoofer-info at caida.org.